Goal:

Click-Ops is old school! Have you ever wondered what it would be like to leave the console behind and use the CLI to create a Lambda function? Along the way, we'll check CloudWatch to see what's going on!

Lessons Learned:

- Create a Lambda function using the AWS CLI

- Check CloudWatch logs

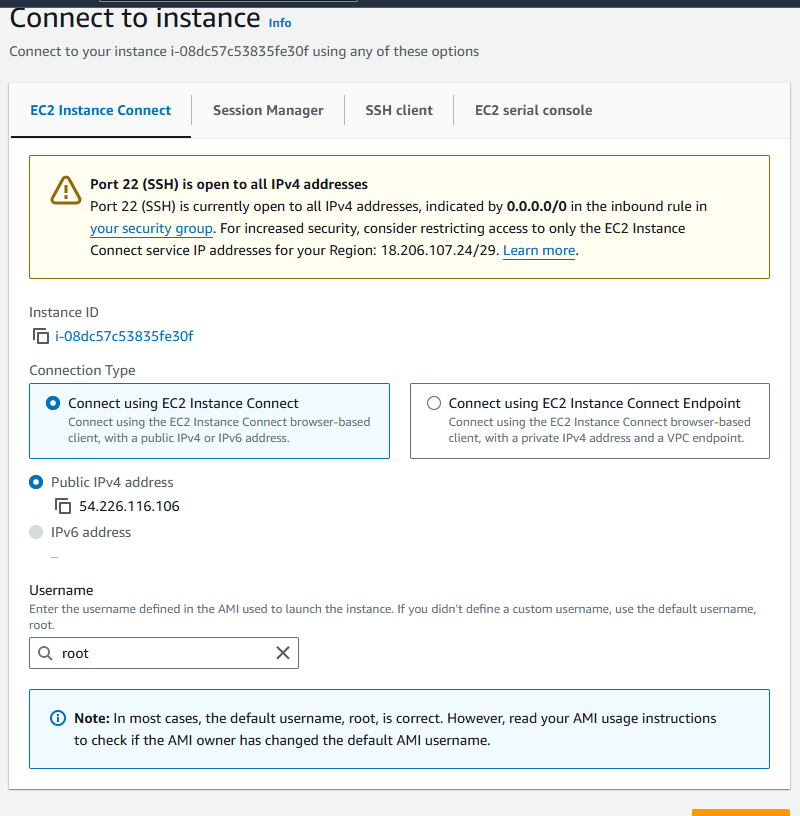

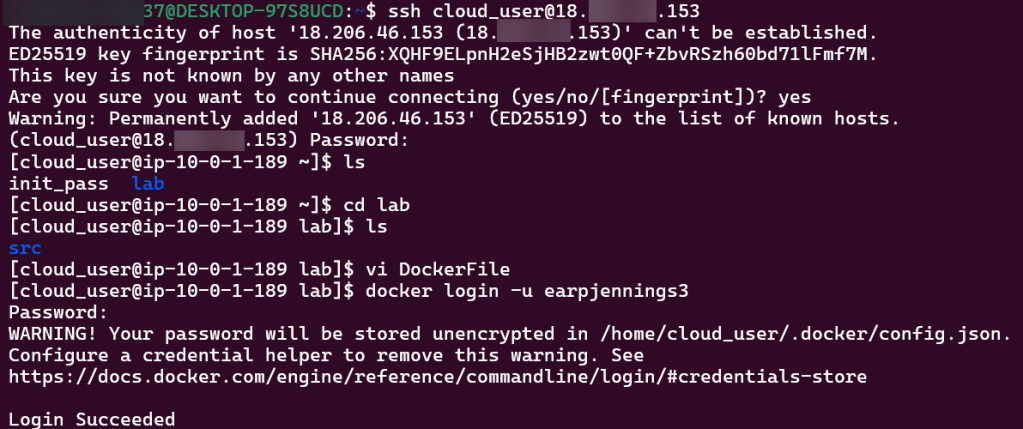

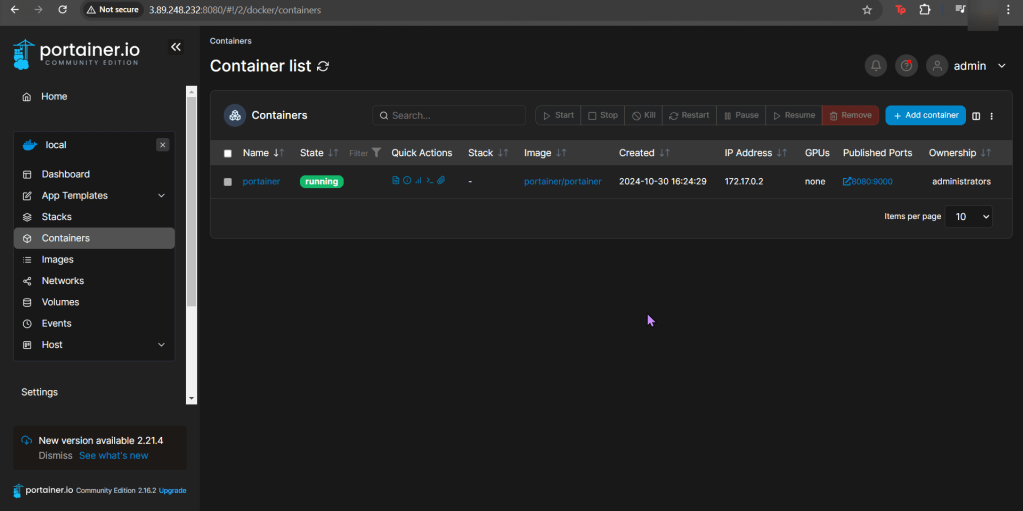

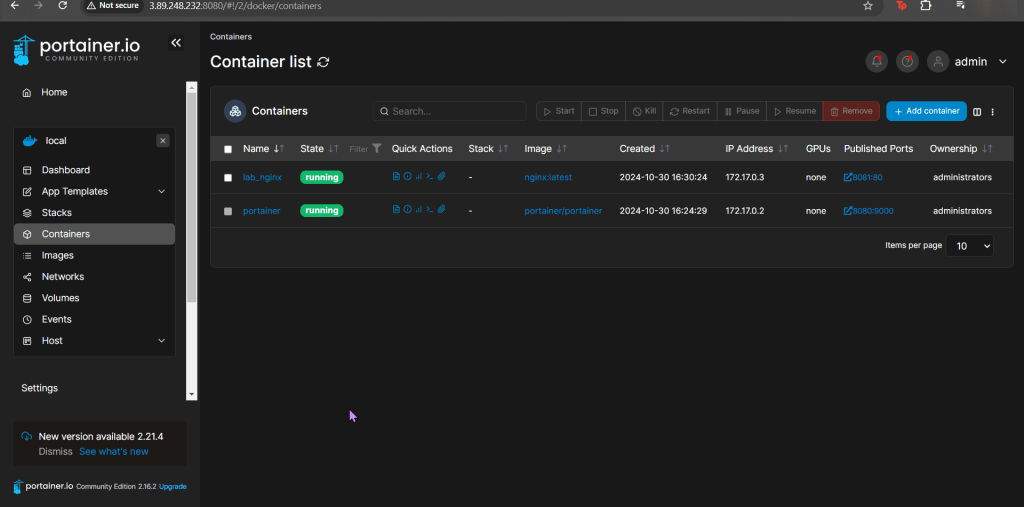

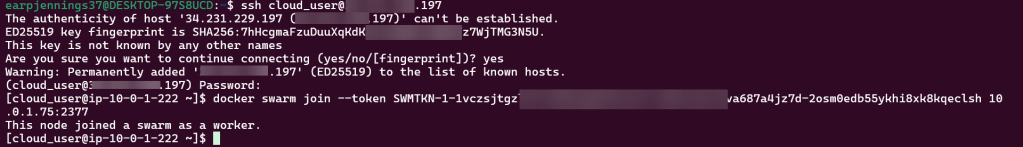

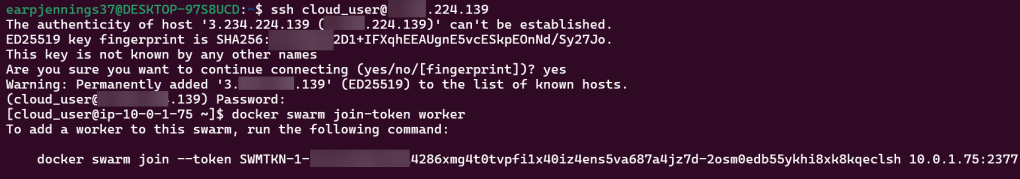

SSH:

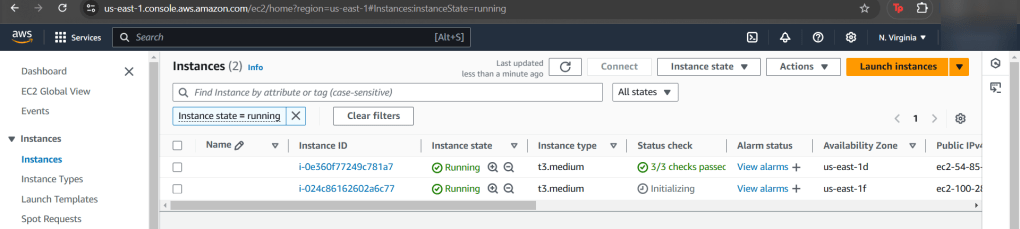

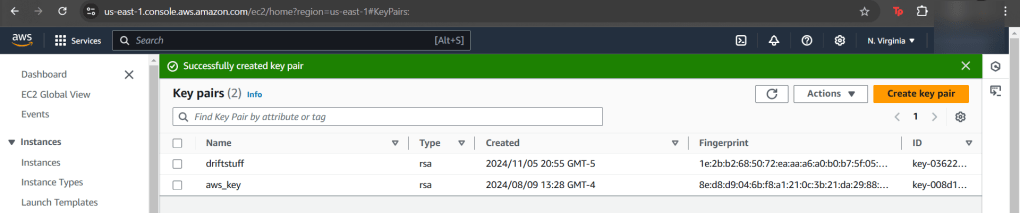

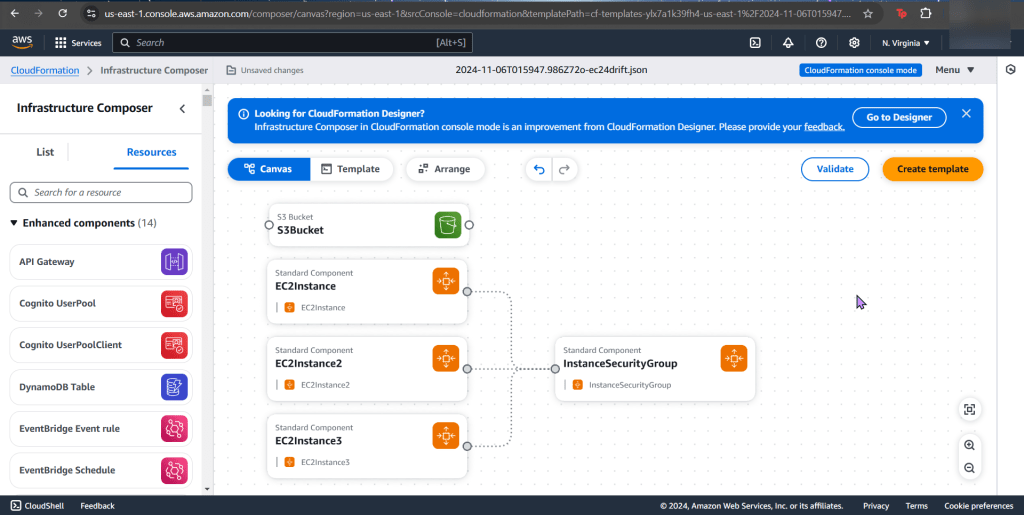

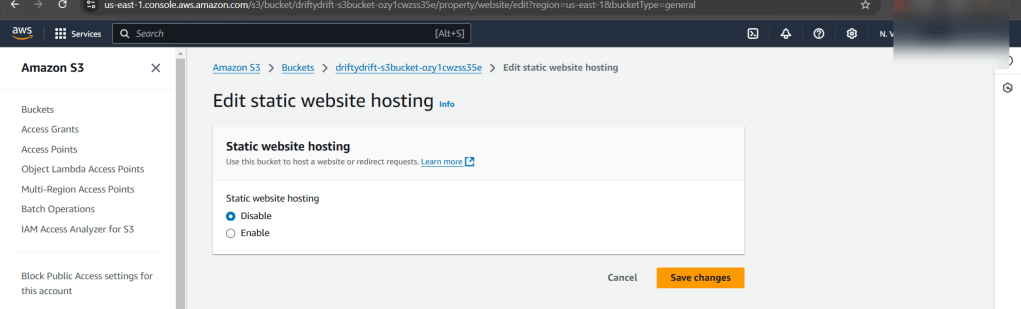

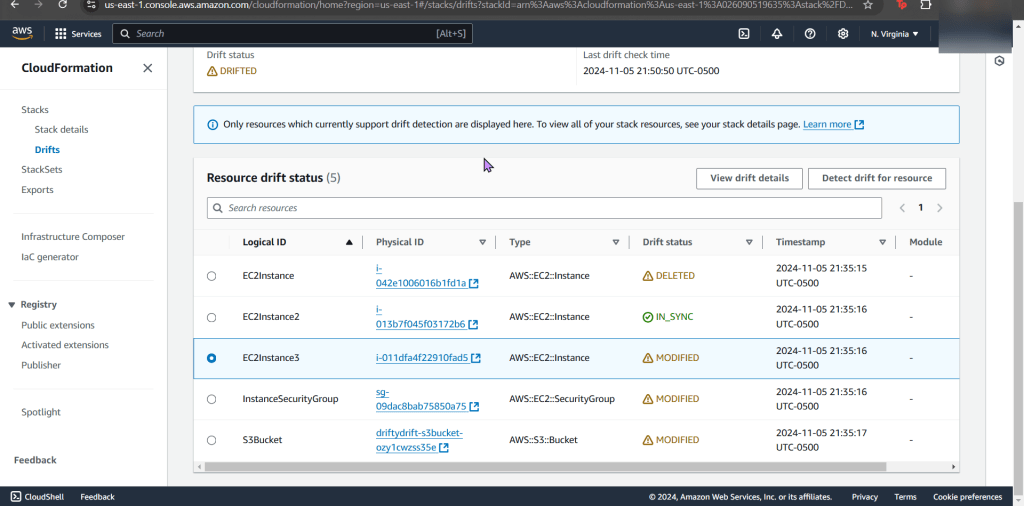

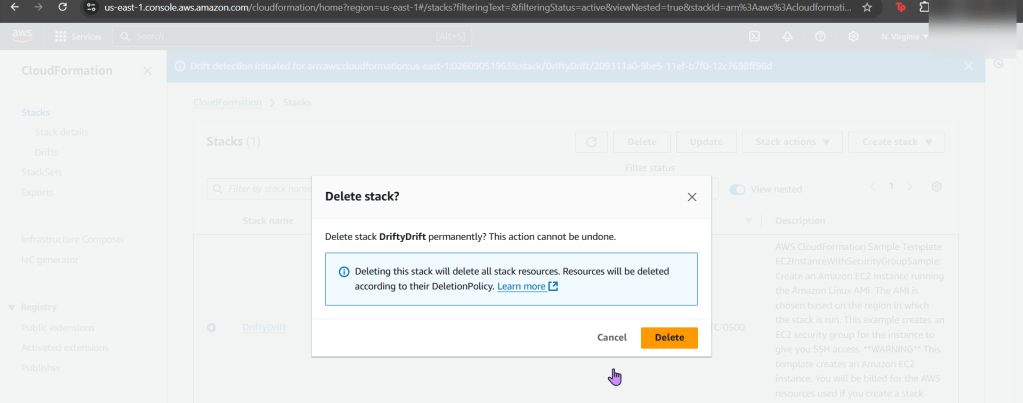

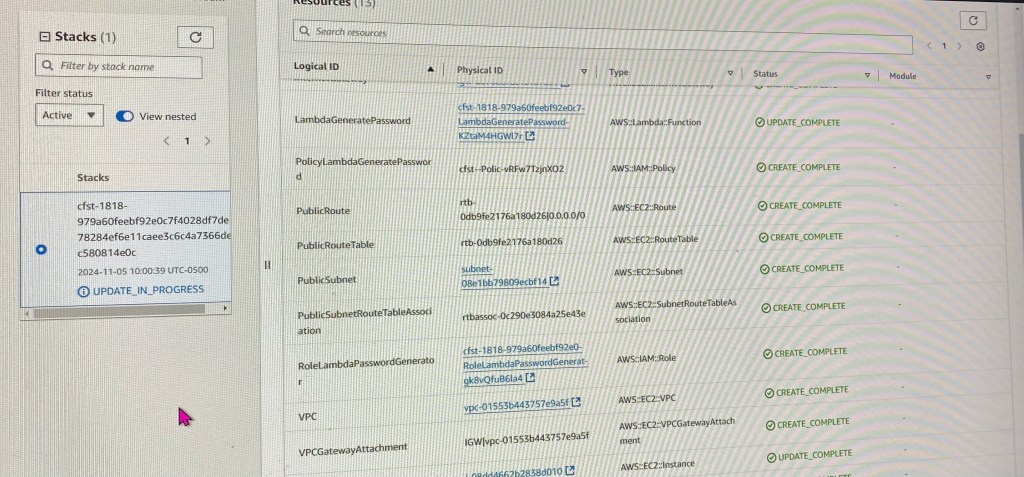

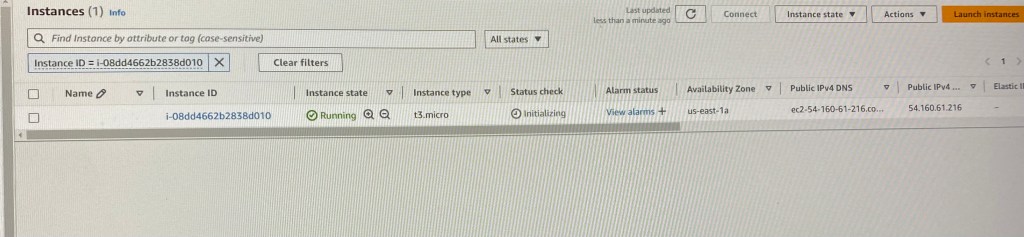

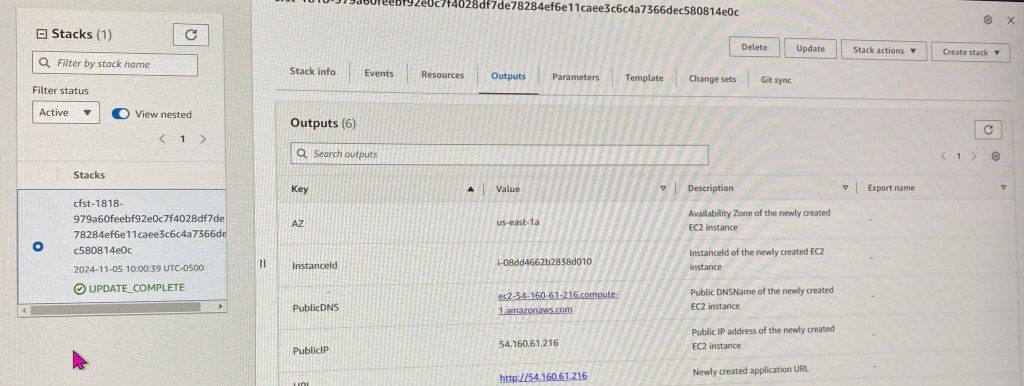

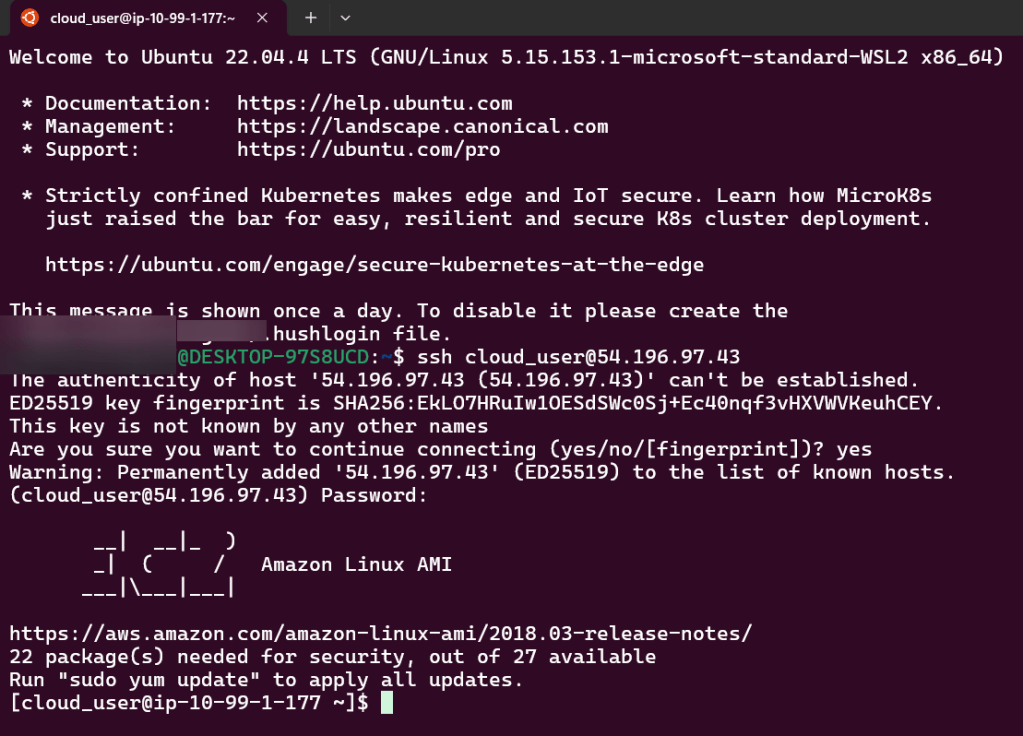

- Create two S3 buckets and an EC2 instance, then use the instance's public IP address to log in over SSH
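If you'd like to skip the console for this step too, here's a minimal sketch of the bucket and SSH commands. The bucket names, region, key file, and IP are hypothetical placeholders, the `ec2-user` login assumes an Amazon Linux AMI, and the small `run` helper only echoes each command when `DRY_RUN=1` so you can preview it first. Launching the EC2 instance itself isn't covered here.

```shell
#!/usr/bin/env bash
# Minimal sketch: create S3 buckets and SSH into an EC2 instance.
# Bucket names, region, key file, and IP are hypothetical placeholders.

# Print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Create one S3 bucket per name given after the region.
create_buckets() {
  local region="$1"
  shift
  for bucket in "$@"; do
    run aws s3 mb "s3://${bucket}" --region "$region"
  done
}

# Log in with the key pair and the instance's public IP
# (ec2-user assumes an Amazon Linux AMI).
ssh_to_instance() {
  local key_file="$1"
  local public_ip="$2"
  run ssh -i "$key_file" "ec2-user@${public_ip}"
}
```

For example, `DRY_RUN=1 create_buckets us-east-1 my-bucket-one my-bucket-two` previews the two `aws s3 mb` calls; drop `DRY_RUN=1` to actually create them. S3 bucket names must be globally unique, so pick your own.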

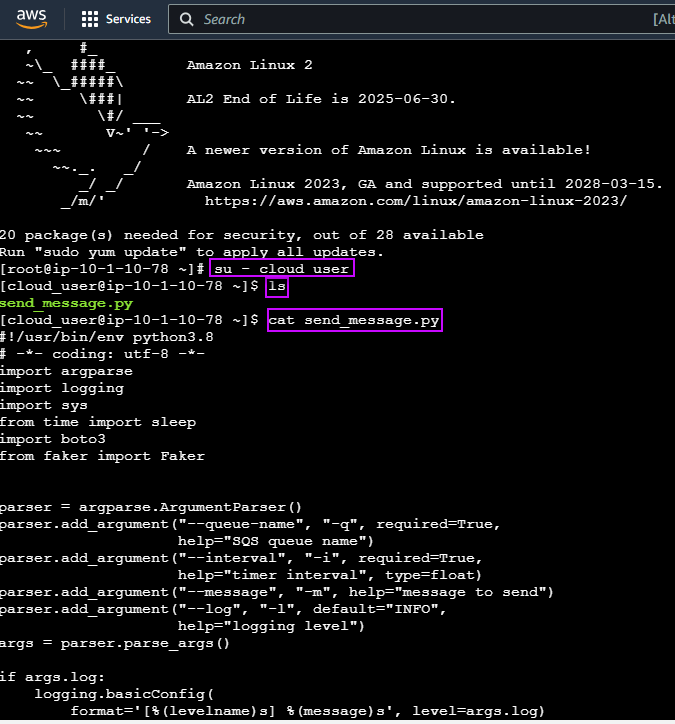

- To confirm the AWS CLI is installed properly, run the following commands:

  - aws help

  - aws lambda help
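A quick pre-flight check you could run before the rest of the lab, sketched under the assumption that you're on AWS CLI v2. `aws --version` prints the installed version and `aws sts get-caller-identity` confirms the instance actually has usable credentials (for example, an attached IAM role); the function name `check_aws_cli` is just a hypothetical wrapper.

```shell
#!/usr/bin/env bash
# Minimal sketch: confirm the AWS CLI is installed and can authenticate.
# check_aws_cli is a hypothetical helper name; assumes AWS CLI v2.

check_aws_cli() {
  # Fail early if the aws binary isn't on PATH.
  if ! command -v aws >/dev/null 2>&1; then
    echo "aws CLI not found on PATH" >&2
    return 1
  fi
  aws --version                  # prints the installed CLI version
  aws sts get-caller-identity    # shows the account/role the CLI is using
}
```

If `get-caller-identity` errors out, fix credentials (or the instance role) before moving on, since every `aws lambda` call later depends on them.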

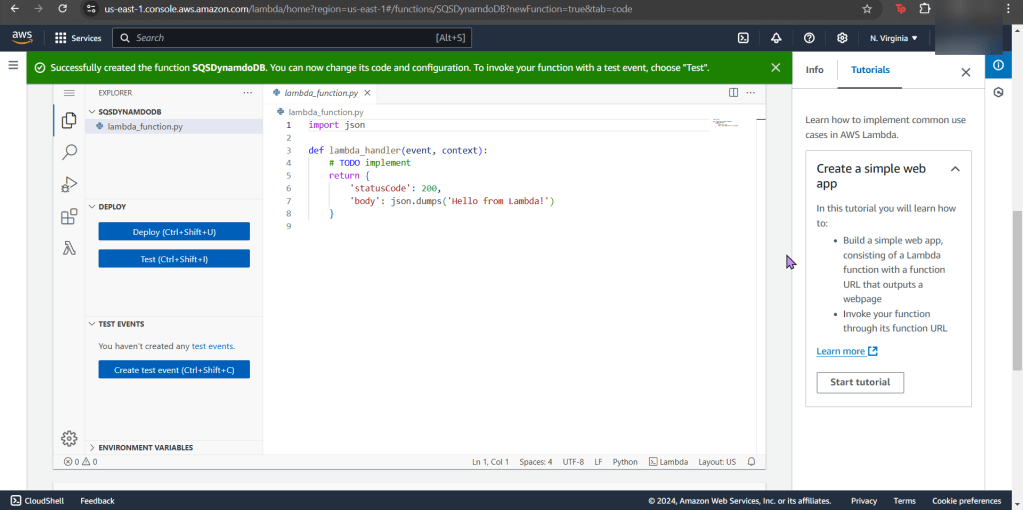

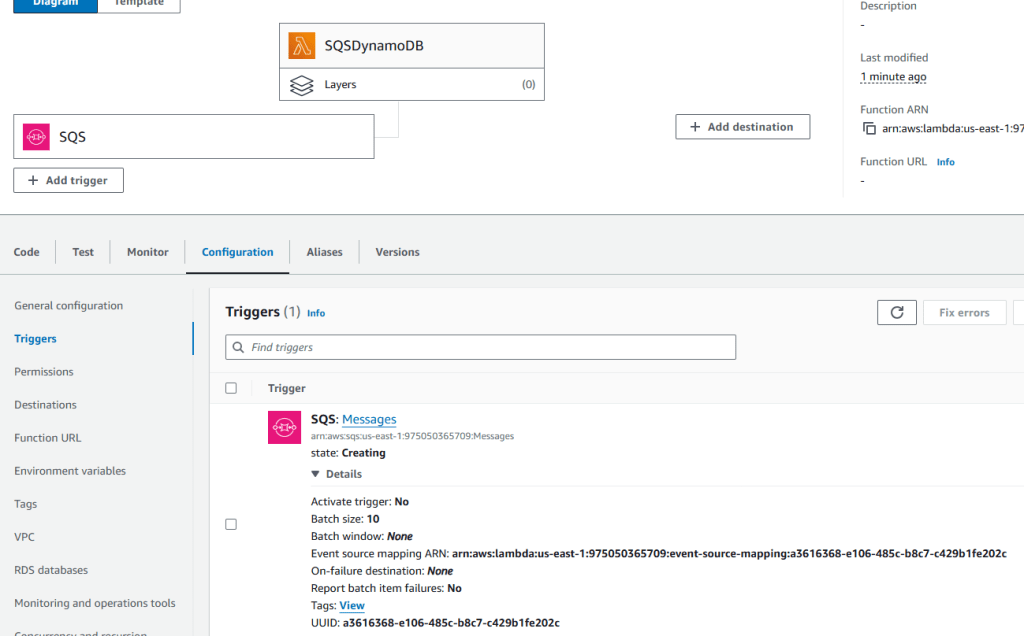

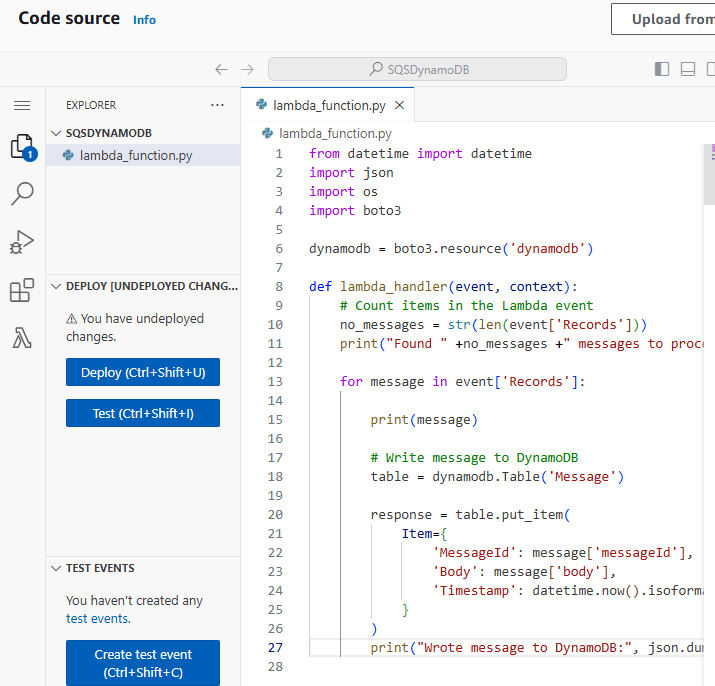

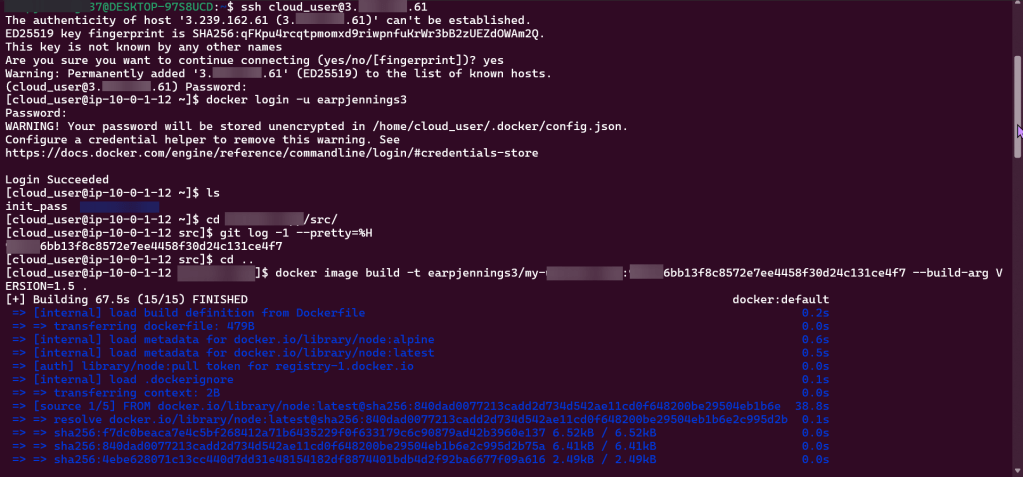

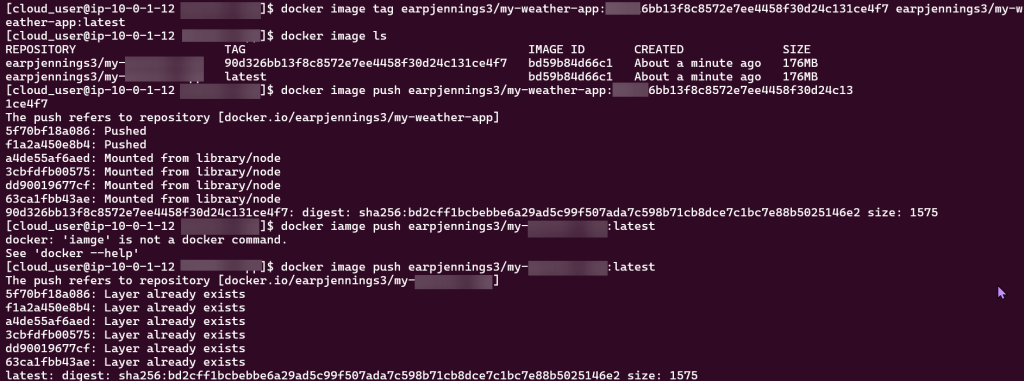

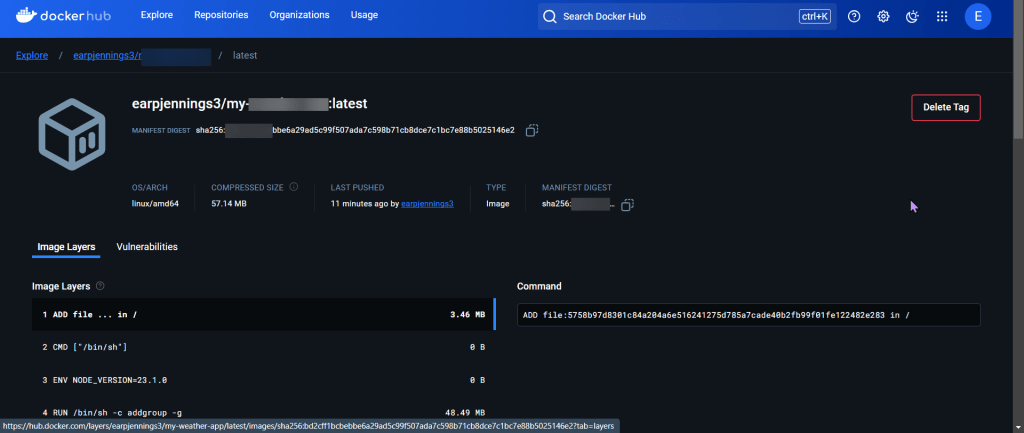

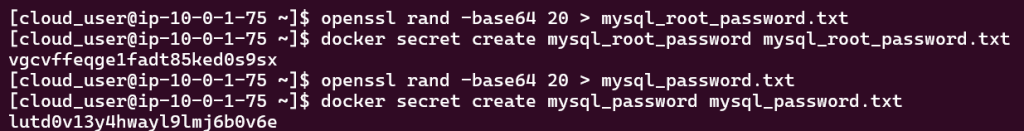

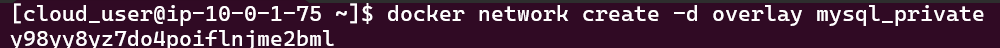

Create & Invoke Function using AWS CLI:

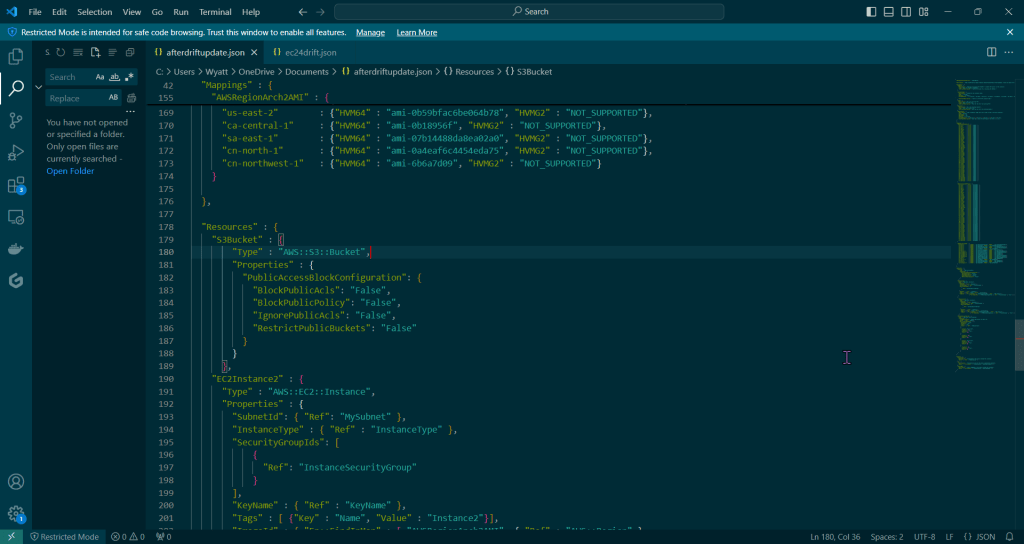

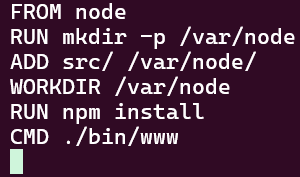

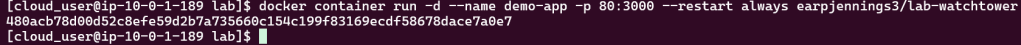

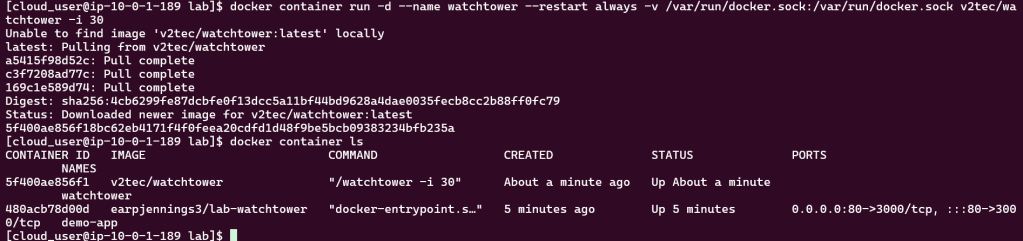

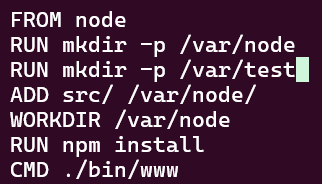

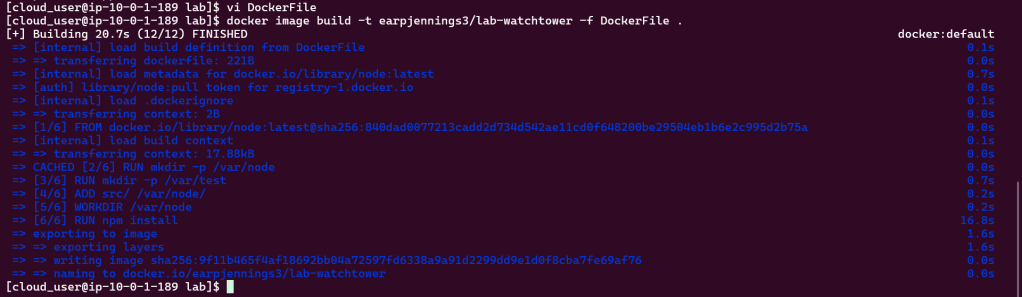

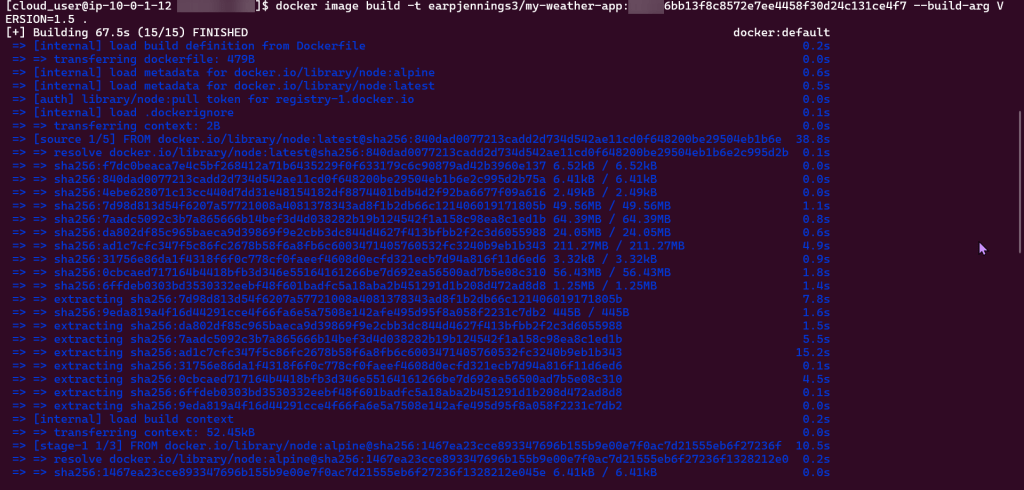

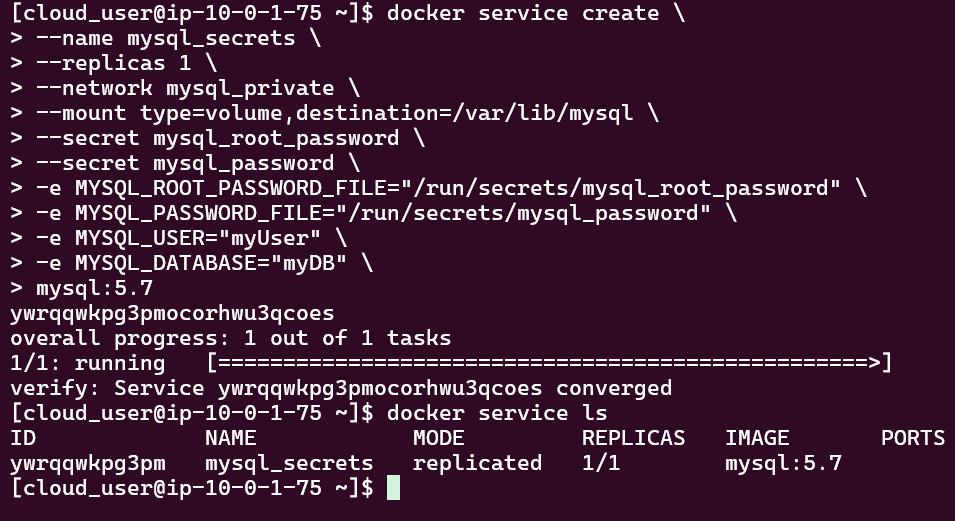

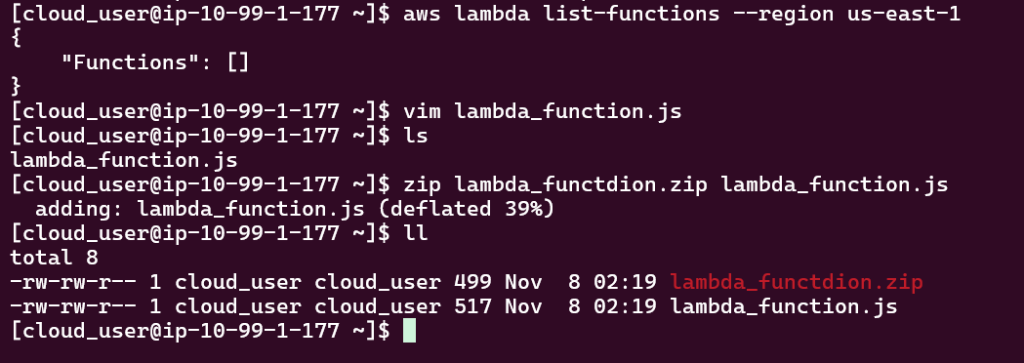

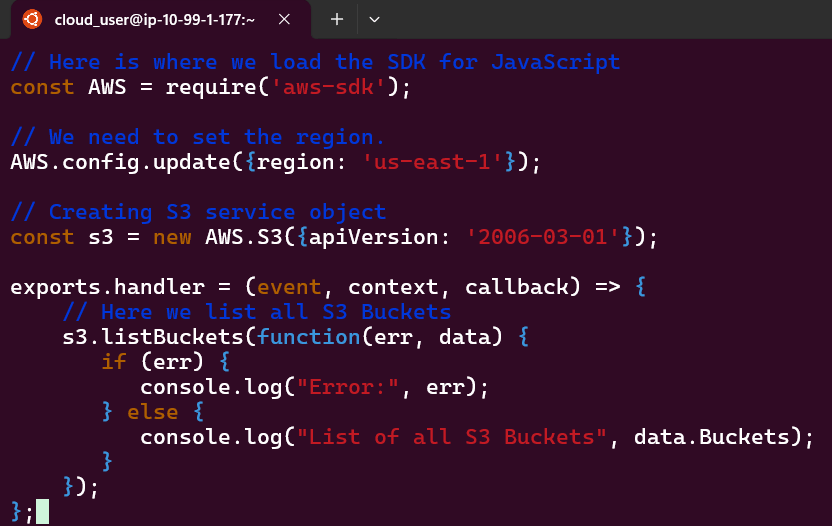

- After confirming your Lambda function will be created in the same region as your S3 bucket, create the function file with vim, then zip it.
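The "write the file, then zip it" step can be sketched without vim as well. This assumes a Python runtime; the file name `lambda_function.py` and the handler body are hypothetical stand-ins, not the exact code from the screenshots. The `print()` call matters later because a Lambda function's printed output ends up in CloudWatch Logs.

```shell
#!/usr/bin/env bash
# Minimal sketch: write a Python handler and zip it for Lambda.
# lambda_function.py and its contents are hypothetical examples.

package_function() {
  local dir="$1"
  mkdir -p "$dir" || return 1
  # Write the handler (the same thing you'd type into vim).
  cat > "$dir/lambda_function.py" <<'EOF'
import json

def lambda_handler(event, context):
    # print() output from Lambda is sent to CloudWatch Logs
    print("Received event: " + json.dumps(event))
    return {"statusCode": 200, "body": json.dumps("Hello from Lambda!")}
EOF
  # Zip from inside the directory so the handler sits at the root of the archive.
  (cd "$dir" && zip -q function.zip lambda_function.py)
}
```

Running `package_function .` leaves `lambda_function.py` and `function.zip` in the current directory.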

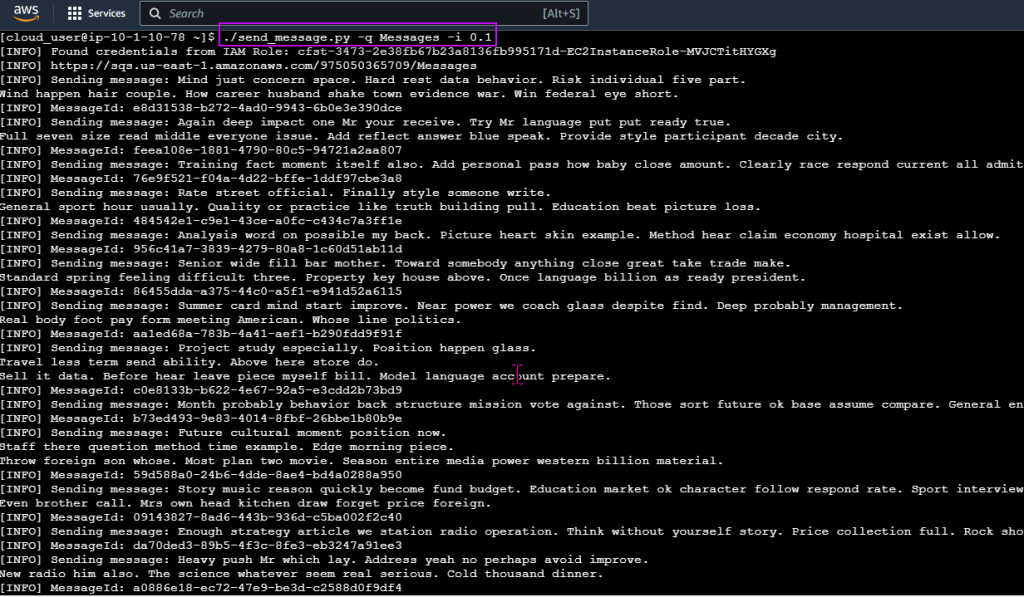

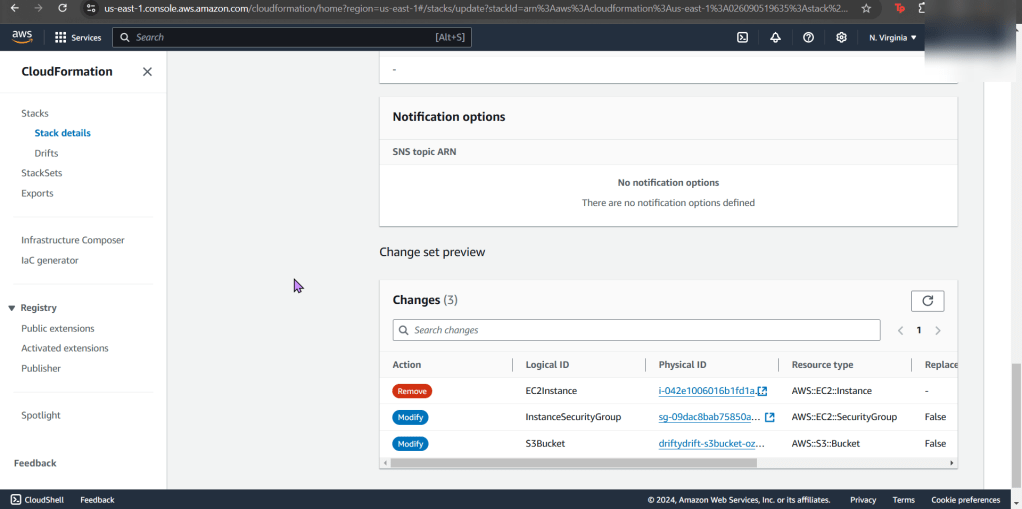

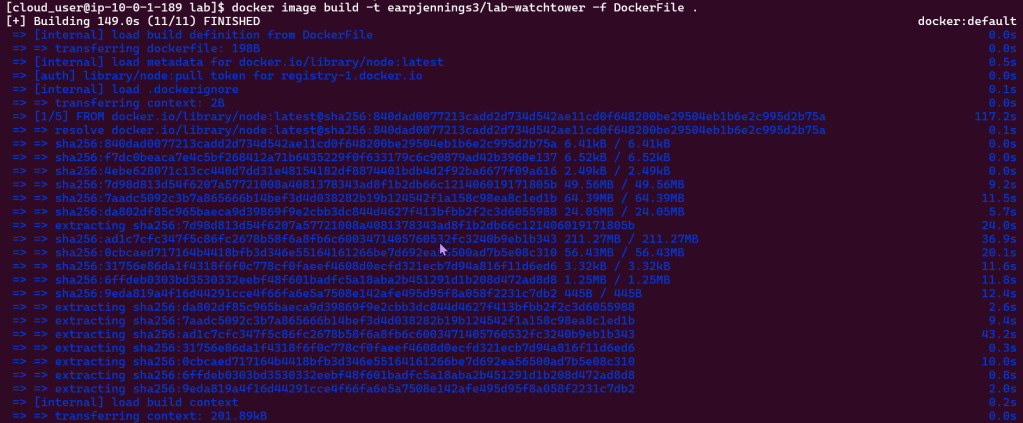

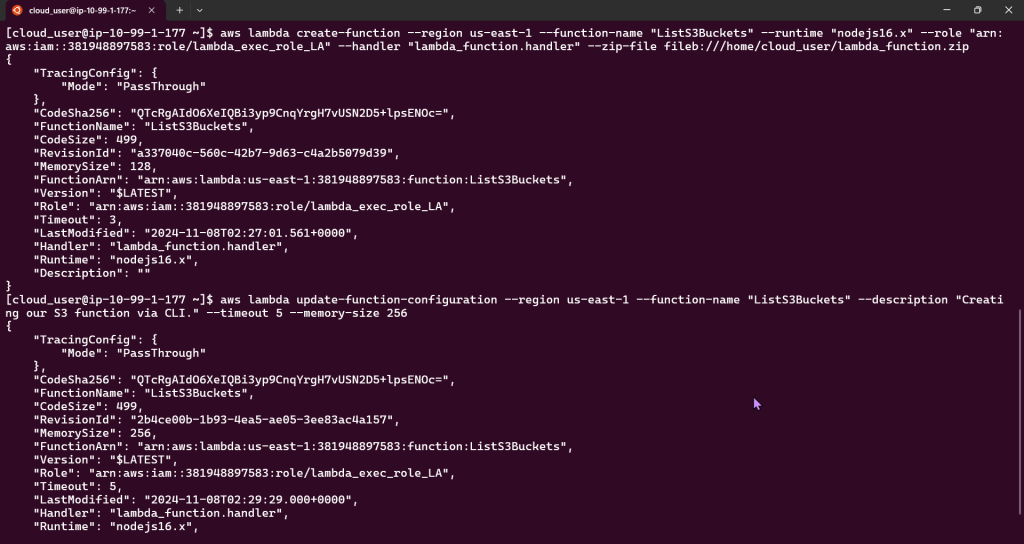

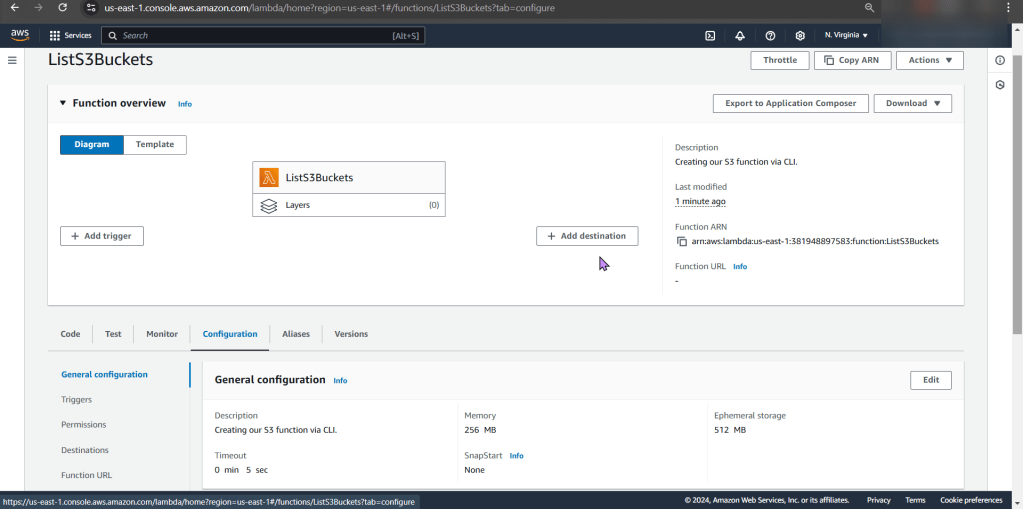

- Create & Update your function
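Here's a minimal sketch of the create and update calls, assuming the zip from the previous step and a Python handler. The function name, role ARN, region, and `python3.12` runtime are hypothetical placeholders; the role needs a Lambda trust policy plus something like the AWSLambdaBasicExecutionRole managed policy so the function can write to CloudWatch Logs. This uploads the zip directly with `fileb://`; pointing at your S3 bucket instead is also possible but isn't shown.

```shell
#!/usr/bin/env bash
# Minimal sketch: create a Lambda function once, then push code updates.
# FUNCTION_NAME, ROLE_ARN, REGION, and the runtime are hypothetical placeholders.

FUNCTION_NAME="${FUNCTION_NAME:-my-cli-function}"
ROLE_ARN="${ROLE_ARN:-arn:aws:iam::123456789012:role/lambda-basic-execution}"
REGION="${REGION:-us-east-1}"

# Print the command instead of running it when DRY_RUN=1.
run() { if [ "${DRY_RUN:-0}" = "1" ]; then echo "$*"; else "$@"; fi; }

# First deployment: creates the function from function.zip.
create_function() {
  run aws lambda create-function \
    --function-name "$FUNCTION_NAME" \
    --runtime python3.12 \
    --role "$ROLE_ARN" \
    --handler lambda_function.lambda_handler \
    --zip-file fileb://function.zip \
    --region "$REGION"
}

# Later changes: re-zip, then replace the code of the existing function.
update_function() {
  run aws lambda update-function-code \
    --function-name "$FUNCTION_NAME" \
    --zip-file fileb://function.zip \
    --region "$REGION"
}
```

`create-function` only works once per name; after that it fails because the function already exists, which is why code changes go through `update-function-code`.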

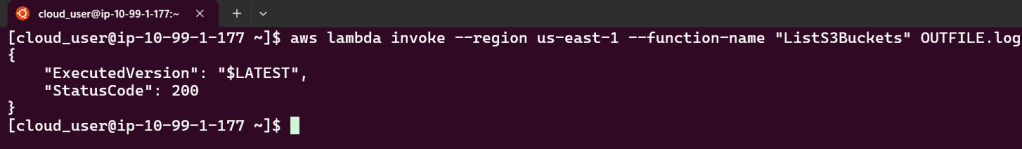

- Invoke your function
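A minimal invoke sketch, using the same hypothetical function name and region as above. On AWS CLI v2, `--cli-binary-format raw-in-base64-out` lets you pass the payload as plain JSON; the function's return value is written to the output file (`response.json` here, an arbitrary name).

```shell
#!/usr/bin/env bash
# Minimal sketch: invoke the function and print its response.
# FUNCTION_NAME and REGION are hypothetical placeholders.

FUNCTION_NAME="${FUNCTION_NAME:-my-cli-function}"
REGION="${REGION:-us-east-1}"

# Print the command instead of running it when DRY_RUN=1.
run() { if [ "${DRY_RUN:-0}" = "1" ]; then echo "$*"; else "$@"; fi; }

invoke_function() {
  local payload="$1"
  [ -n "$payload" ] || payload='{}'   # default to an empty JSON event
  run aws lambda invoke \
    --function-name "$FUNCTION_NAME" \
    --cli-binary-format raw-in-base64-out \
    --payload "$payload" \
    --region "$REGION" \
    response.json || return 1
  # The handler's return value lands in response.json.
  if [ "${DRY_RUN:-0}" != "1" ]; then
    cat response.json
    echo
  fi
}
```

For example, `invoke_function '{"name":"test"}'` sends that event and prints whatever the handler returned.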

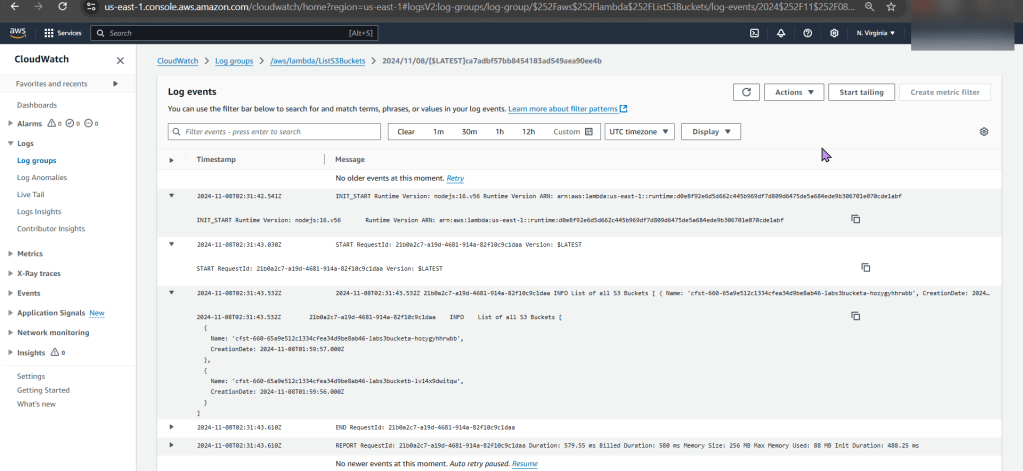

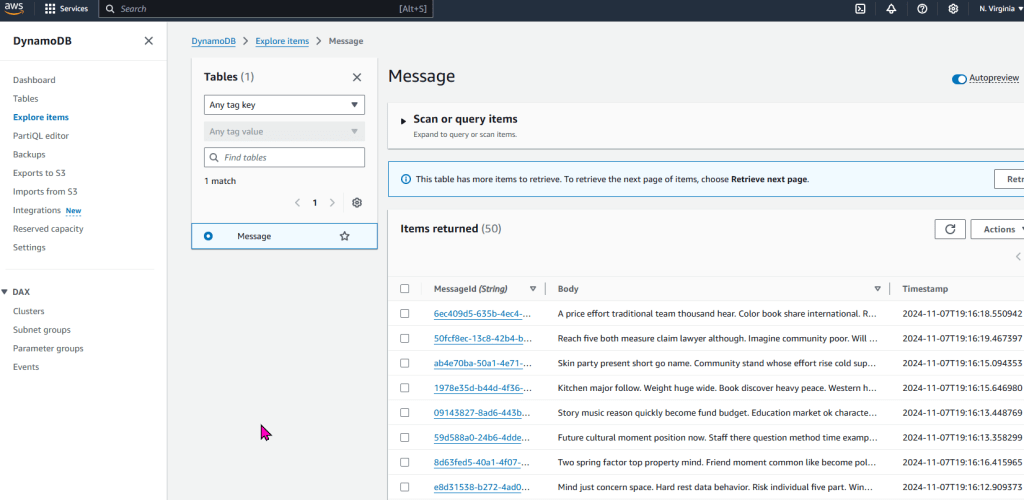

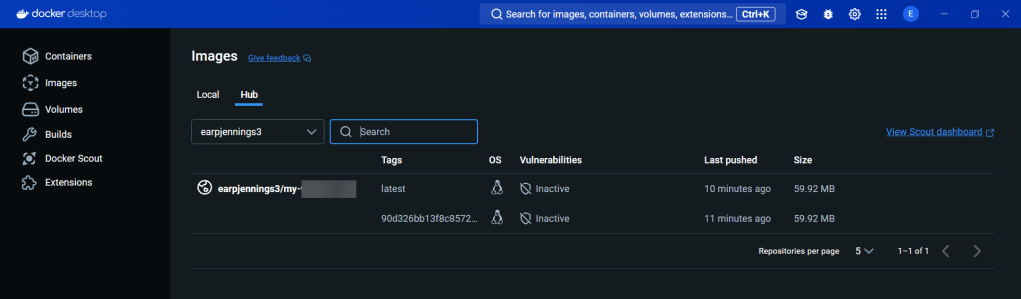

Check CloudWatch Logs:

- Voilà, at last!
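You can also read those logs without opening the console. This sketch assumes AWS CLI v2 (which provides `aws logs tail`) and Lambda's default log group naming of `/aws/lambda/<function-name>`; the function name and region are the same hypothetical placeholders used earlier.

```shell
#!/usr/bin/env bash
# Minimal sketch: show recent CloudWatch log events for the function.
# FUNCTION_NAME and REGION are hypothetical placeholders; assumes AWS CLI v2.

FUNCTION_NAME="${FUNCTION_NAME:-my-cli-function}"
REGION="${REGION:-us-east-1}"

# Print the command instead of running it when DRY_RUN=1.
run() { if [ "${DRY_RUN:-0}" = "1" ]; then echo "$*"; else "$@"; fi; }

# Lambda writes to the log group /aws/lambda/<function-name> by default.
tail_logs() {
  run aws logs tail "/aws/lambda/${FUNCTION_NAME}" --since "${1:-10m}" --region "$REGION"
}
```

`tail_logs 1h` widens the window to the last hour; adding `--follow` to the command keeps streaming new events while you invoke the function again.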